AI models have the potential to revolutionize patient care, diagnosis, and treatment outcomes. However, ensuring these models are accurate, up-to-date, and reliable is paramount. In this blog, we’ll explore three crucial techniques, Fine-Tuning, Retraining, and RAG (Retrieval-Augmented Generation), and their significance in advancing AI models within healthcare.

Adapting a model to a specific domain often results in more precise and personalized responses, predictions, or forecasts. Healthcare AI models, particularly those based on deep learning, often require continuous adaptation to maintain their effectiveness. Here’s how these techniques play a pivotal role:

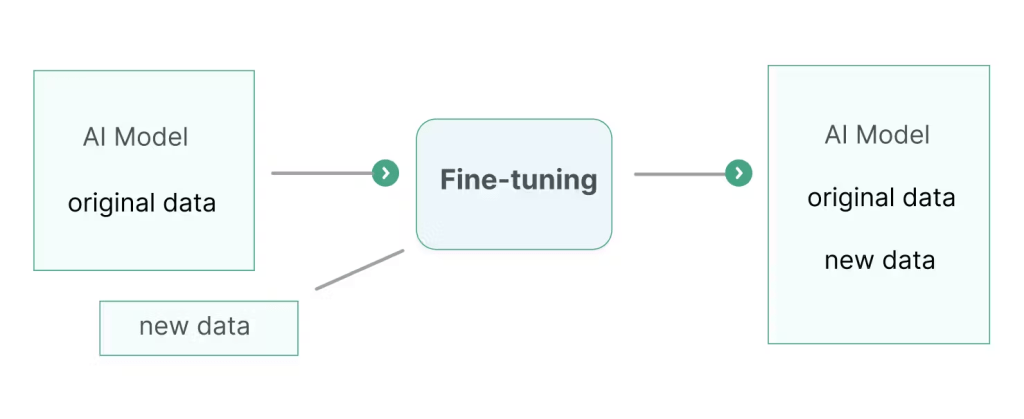

Fine-Tuning: Tailoring Models to Fit

Fine-tuning is akin to giving an AI model a specialized skill set. It involves taking a pre-trained model and adjusting its parameters to better perform on a specific task or dataset. Think of it as customizing a tool for a particular job rather than building it from scratch.

For instance, if you have a pre-trained language model like GPT (Generative Pre-trained Transformer), you can fine-tune it on domain-specific text data such as medical documents, legal texts, or customer support conversations. This process allows the model to learn domain-specific nuances and produce more accurate results.
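To make the idea concrete, here is a minimal sketch in plain Python. It is a toy, not how a large language model is actually fine-tuned: it assumes a tiny linear model whose "pre-trained" weights are made up, and a synthetic domain dataset. All names (`fine_tune`, `pretrained_w`, `domain_data`) are hypothetical. The point it illustrates is that training starts from existing parameters rather than from scratch.

```python
import random

def predict(weights, bias, x):
    """Linear model: weighted sum of the features plus a bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def mse(weights, bias, data):
    """Mean squared error of the model over (features, target) pairs."""
    return sum((predict(weights, bias, x) - y) ** 2 for x, y in data) / len(data)

def fine_tune(weights, bias, data, lr=0.1, epochs=200):
    """Continue gradient descent on domain data, starting from the given parameters."""
    w, b = list(weights), bias
    n = len(data)
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for x, y in data:
            err = predict(w, b, x) - y
            for i, xi in enumerate(x):
                grad_w[i] += 2 * err * xi / n
            grad_b += 2 * err / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

# Assumed "pre-trained" parameters (invented for this example).
pretrained_w, pretrained_b = [1.0, 0.5], 0.0

# Synthetic domain data whose true relationship differs slightly
# from what the pre-trained parameters encode.
rng = random.Random(0)
domain_data = []
for _ in range(50):
    x = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
    domain_data.append((x, 1.2 * x[0] + 0.3 * x[1] + 0.1))

loss_before = mse(pretrained_w, pretrained_b, domain_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, domain_data)
loss_after = mse(tuned_w, tuned_b, domain_data)
print(f"loss before: {loss_before:.4f}, loss after: {loss_after:.6f}")
```

With a real deep learning model the same pattern is usually applied through a training framework, often with a small learning rate or with some layers frozen so the pre-trained knowledge is not overwritten.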

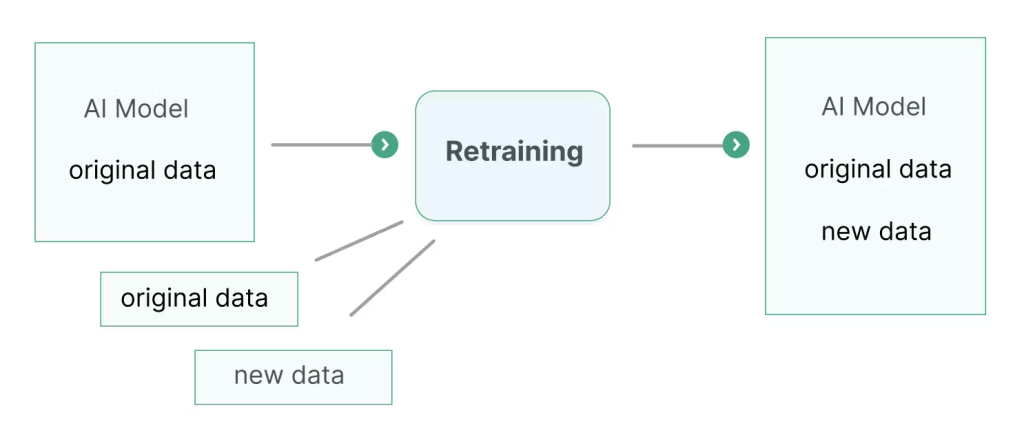

Retraining: Adapting to Changing Realities

Just as athletes need regular training to stay in peak condition, AI models benefit from retraining to stay relevant. Retraining involves updating a model with new data over time to ensure it remains effective as conditions change or new information becomes available.

For example, in applications like natural language processing or computer vision, retraining models periodically with fresh data helps them adapt to evolving language usage, new trends, or changes in the environment they operate in.
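One common way to put this into practice is to monitor accuracy on recent labelled data and refit when it drops. The sketch below assumes a deliberately simple 1-D nearest-centroid classifier and invented data with simulated drift; the function names and the 0.9 threshold are illustrative choices, not a recommended policy.

```python
from statistics import mean

def fit(samples):
    """Fit a 1-D nearest-centroid classifier: one mean value per label."""
    by_label = {}
    for x, label in samples:
        by_label.setdefault(label, []).append(x)
    return {label: mean(xs) for label, xs in by_label.items()}

def predict(model, x):
    """Return the label whose centroid is closest to x."""
    return min(model, key=lambda label: abs(x - model[label]))

def accuracy(model, samples):
    """Fraction of samples the model labels correctly."""
    return sum(predict(model, x) == y for x, y in samples) / len(samples)

def retrain_if_degraded(model, recent, threshold=0.9):
    """Refit on recent labelled data when accuracy falls below the threshold."""
    if accuracy(model, recent) < threshold:
        return fit(recent), True
    return model, False

# Original training data: label 0 clusters near 1.0, label 1 near 3.0.
old_data = [(0.8, 0), (1.0, 0), (1.2, 0), (2.8, 1), (3.0, 1), (3.2, 1)]
model = fit(old_data)

# Newer data in which both classes have shifted upward (simulated drift).
new_data = [(2.3, 0), (2.5, 0), (2.7, 0), (4.3, 1), (4.5, 1), (4.7, 1)]

acc_before = accuracy(model, new_data)
model, retrained = retrain_if_degraded(model, new_data)
acc_after = accuracy(model, new_data)
print(f"before: {acc_before:.2f}, retrained: {retrained}, after: {acc_after:.2f}")
```

On this toy data the old model misclassifies every shifted label-0 sample, so the check triggers a refit. A real pipeline would evaluate on held-out data rather than the data it retrains on, and might combine old and new samples instead of replacing them.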

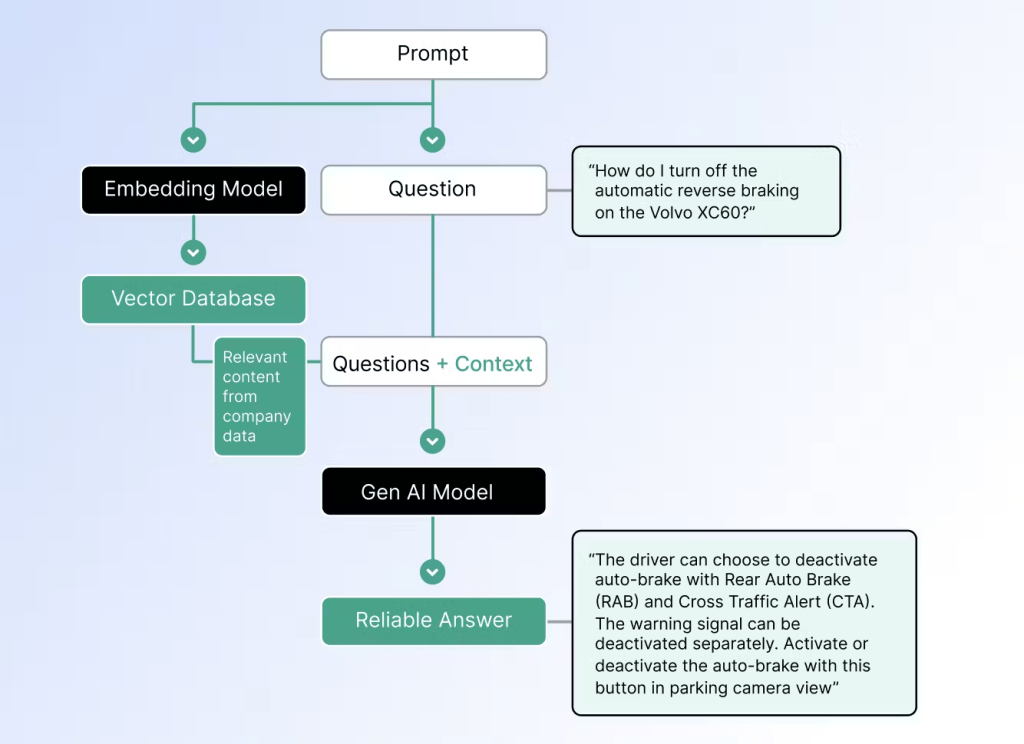

RAG (Retrieval-Augmented Generation): Broadening Horizons

RAG combines the power of information retrieval with generative models to produce more informative and contextually relevant responses. It’s particularly useful in tasks where context plays a crucial role, such as question answering or content generation.

In RAG, the model first retrieves relevant information from a large knowledge base in response to a query and then generates a response based on both the retrieved information and the query itself. This approach allows AI systems to generate more accurate and informative responses by leveraging external knowledge.
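The retrieve-then-generate flow can be sketched as follows. This is a minimal illustration under stated assumptions: retrieval uses simple bag-of-words cosine similarity instead of the learned embeddings a production system would likely use, the knowledge base entries are invented placeholders, and `generate` is a hypothetical stub standing in for a call to a generative model.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query, passages):
    """Combine the retrieved passages and the query into one prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )

def generate(prompt):
    """Hypothetical stub: a real system would call a generative model here."""
    return f"[model output for a prompt of {len(prompt)} characters]"

# Invented placeholder entries, not real policies or clinical guidance.
knowledge_base = [
    "Cardiology clinic appointments are booked through the patient portal.",
    "Radiology reports are usually available within two business days.",
    "Pharmacy refill requests can be submitted by phone or online.",
]

query = "How do I request a pharmacy refill?"
passages = retrieve(query, knowledge_base, k=1)
prompt = build_prompt(query, passages)
print(prompt)
print(generate(prompt))
```

Because the answer is grounded in retrieved text, the knowledge base can be updated without changing the model's parameters.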

Enhancing AI Models for Real-World Applications

Fine-tuning, retraining, and RAG each play a vital role in enhancing AI models for real-world applications:

- Improved Performance: Fine-tuning allows models to excel in specific domains or tasks by adapting to the data they’ll encounter.

- Adaptability: Retraining ensures models stay accurate and relevant over time as new data becomes available.

- Contextual Understanding: RAG enhances AI systems’ ability to understand and generate contextually relevant responses by leveraging external knowledge.

Fine-tuning, retraining, and RAG are not just technical processes; they are fundamental strategies for ensuring the reliability, accuracy, and relevance of AI models in healthcare. As AI continues to permeate healthcare systems, these techniques will play a crucial role in enhancing patient care, enabling early diagnosis, and ultimately saving lives.